We're excited to announce that RINEARN has launched the VCSSL Assistant, an AI that can help you with the VCSSL programming language!

Here's what it can do:

You can access the AI while logged in to ChatGPT via the following page:

However — as we'll explain in more detail later — achieving practical, reliable results currently means it's virtually essential to sign in with a paid ChatGPT account (ChatGPT Plus) and use the "GPT-5 Thinking" model, which performs careful, deep reasoning.

ChatGPT's core capabilities are improving day by day, so it's possible that the free plan will run this assistant smoothly in the future. For now, though, using a free ChatGPT account leads to frequent mistakes, making real-world use difficult. Sorry!

Even with a Plus account and GPT-5 Thinking, it can still:

That's hard to avoid at the moment (especially for more difficult topics). Thanks in advance for your understanding.

We're also planning a separate "Behind the Scenes" post about this AI. For today, we'll focus on the "Overview & How to Use" part.

Alright — let's dive in!

First, let's briefly look back at the background of support AIs at RINEARN.

About a year ago, RINEARN began offering AIs that help users learn and use our software. For example, our most popular freeware, RINEARN Graph 3D, already has an assistant AI that's been working quite well.

If you're wondering "What exactly is a support AI?", we explained the concept in our announcement at the time. Feel free to read it alongside this post:

Since then we've gradually rolled out assistants for other software as well. You can see the current list here:

The lineup is steadily taking shape.

The support AIs above run on ChatGPT's "GPTs" platform and work roughly as follows:

The overall answer quality is largely determined by how well steps 2 and 3 are executed.

Comparing within the same model, we commonly see two issues:

If these two hurdles are too high relative to the AI's capabilities — its depth of reasoning and the amount of text it can consider at once — errors increase and the assistant can become impractical to use.

And yes — because VCSSL is a programming language, building a support AI runs into both challenges A and B as steep hurdles.

First, the documentation is huge (official guides, library references, sample code collections, and more). And to produce code that actually runs, the assistant has to really understand those materials and put the pieces together correctly — like solving a puzzle with strict logic.

As a result, the VCSSL support AI hadn't reached practical quality — it was effectively "on hold," waiting for model advances.

After roughly a year on ice due to these issues, a model that finally seems up to the task launched on ChatGPT this month (August 2025): GPT-5 Thinking.

It combines deep, careful reasoning with solid accuracy and natural phrasing, and it can handle larger amounts of text at once. In short, it's a strong fit for document-heavy, code-oriented work.

We immediately swapped in this new model as the "engine" of the previously shelved VCSSL support assistant and tested it. At last, it seems to squeak past the bar for practical use.

Questions and answers finally line up, and it already feels useful enough to be better than not having it.

So we went ahead and published it as-is. We'll keep tuning and improving it from here.

There's a caveat: GPT-5 Thinking isn't available on the free ChatGPT plan right now. You'll need a ChatGPT Plus subscription (about US$20/month) to use it.

Can you still use the VCSSL assistant on the free plan? Technically, yes, but in that case the assistant will run on plain GPT-5 (without Thinking). While plain GPT-5 is quite capable for general use, it isn't up to the demands of VCSSL support: answers not supported by the documentation ("hallucinations") and confused, non-working code both become noticeably more frequent.

So for the moment:

That said, the free-plan models on ChatGPT are upgraded fairly often, so this situation may change. If/when the free plan becomes genuinely useful for VCSSL support, we'll announce it here!

That's the overview of the new VCSSL support AI.

From here, let's actually try it — ask it some questions and see how it does!

First, sign in to ChatGPT. For the reasons explained above, using a ChatGPT Plus account is highly recommended. You can still *use* the free plan (with quality trade-offs).

Once signed in, open the page below to chat with the VCSSL Assistant:

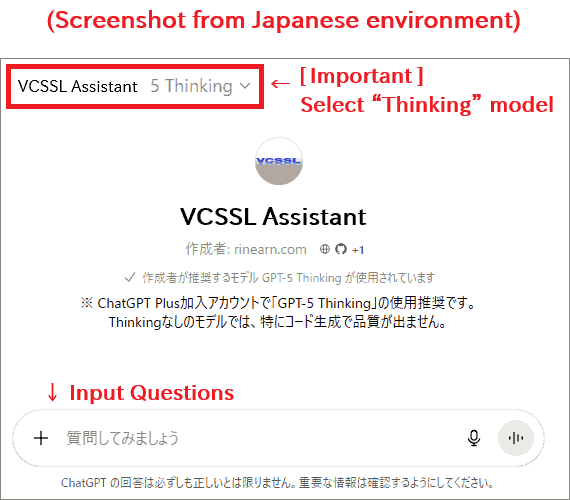

At the top of the chat view, you can choose the underlying AI model the VCSSL Assistant uses.

As of August 2025, the main options (all GPT-5 series) are:

There's also Auto, which switches between Thinking and Fast depending on the difficulty of the question.

In general, select the "Thinking" model here. As noted earlier, the VCSSL Assistant has to do fairly demanding work; without Thinking, things tend to fall apart and the experience degrades quickly.

Once you've chosen a model, type a question into the input box at the bottom.

Let's start with the basics: "What is VCSSL, anyway?"

User:

Hello! What is VCSSL?

(Processing time: 1m16s)

AI:

Hello!

VCSSL is a script language with a simple, C-like syntax for quickly building

lightweight numerical computation/visualization and small GUI tools. It's

designed to keep the learning cost low, so if you're used to C-style syntax,

it's especially approachable.

It originally began as a simple scripting language for a calculator app, so it has the flavor of "a C-like language that could even run on a calculator."

At a glance:

You can run this right away (file extension: .vcssl):

This "Hello, World!" is written the same way in both VCSSL and Vnano; you just switch the file extension between .vcssl and .vnano.

If you're curious, try starting with "GUI / 2D / 3D" to get a feel for it. I'm happy to help with use-case ideas or walk through samples!

Note: The conversation shown here is an English translation of an exchange originally conducted in Japanese. Results may differ slightly when interacting in English.

That's a solid, on-point answer.

It will answer a wide range of questions in the same way.

One thing to keep in mind: whatever you ask, you should expect to wait anywhere from a few dozen seconds to a few minutes.

VCSSL is a very niche programming language, so the base model doesn't really have much prior knowledge about it.

So:

In short, it "learns VCSSL from scratch each time." That's a lot of work.

On top of that:

That's nontrivial, and it requires slow, careful reasoning (hence the need for the Thinking model).

Because it has to do all of that for each question, it takes time. If it feels slow, please picture the AI slogging through the steps above — and give it a moment.

From here, let's ask about a few different topics to get a feel for how it responds.

Let's start simple — ask something basic about language features:

User:

Hello! What numeric types does VCSSL have?

(Processing time: 1m42s)

AI:

Hello!

VCSSL provides six "numeric types":

Notes:

If needed, I can also summarize the arithmetic rules for each type and some casting tips — feel free to ask!

Note: The conversation shown here is an English translation of an exchange originally conducted in Japanese. Results may differ slightly when interacting in English.

That's a straightforward, on-target answer.

This information is already covered early in the guides, so it's an easy one to answer — humans can also guess "it's probably in that section of the guide," and they'd be right:

Next, let's try asking about information that's a bit harder to track down.

The VCSSL Assistant can read every page of VCSSL's official programming guides. So when you're in that "I'm sure I saw this in one of the guides somewhere... but where?" situation, it's pretty good at finding the right spot and answering.

For example:

User:

Thanks! Here's my next question.

I want to launch my own executable "test.exe" from VCSSL code. How can I do that? I'd also like to capture its output after launch.

(Processing time: 2m 28s)

AI:

Great! There are two common ways to approach this:

1: If you only need to launch it (simple)

Use the System library's `system` (synchronous) / `exec` (asynchronous). System is auto-loaded, so you don't need an import. For path handling, use `File.getFilePath` to avoid failures.

    // Converting to an absolute path via File.getFilePath is recommended
    import File;

    string exe = getFilePath("test.exe");
    int status = system(exe, "arg1", "arg2"); // waits; returns exit status (synchronous)
    int pid = exec(exe, "arg1", "arg2"); // returns process ID (asynchronous)

Note: This approach cannot directly capture stdout/stderr. If you want output, use the Process library below.

2: If you also want to capture output (Process library)

With Process, you can control stdin/stdout/stderr, the working directory, text encoding, and more.

Main functions you'll use

Example: launch `test.exe` and capture all output

    import File;
    import Process;

    // 1: Prepare the absolute path and (optionally) arguments as an array
    string exe = getFilePath("test.exe");
    string cmd[] = { exe /*, "arg1", "arg2" */ };

    // 2: Create the process (not running yet)
    int pid = newProcess(cmd);

    // (Optional) Set working directory to the folder containing the exe (must be done before start!)
    string exeDir = getFilePath(exe, PARENT); // (getFilePath with PARENT)
    setProcessWorkingDirectory(pid, exeDir); // (required before start)

    // (Optional) Match encodings (e.g., "CP932" for Japanese Windows; many environments use "UTF-8")
    // setProcessOutputEncoding(pid, "CP932");
    // setProcessErrorEncoding(pid, "CP932");

    // 3: Start execution
    startProcess(pid);

    // (Optional) For interactive tools, send stdin (a trailing newline is often required → use EOL)
    setProcessInput(pid, "hello" + EOL); // (EOL is the newline constant)

    // 4) Wait for completion
    waitForProcess(pid);

    // 5) Collect outputs and the exit code
    string out = getProcessOutput(pid);
    string err = getProcessError(pid);
    int code = getProcessExitValue(pid);

    // Display
    println("exit=" + code);
    println("stdout:\n" + out);
    println("stderr:\n" + err);

With this, you've got the full flow: launch `test.exe` → capture its output.

If needed, you can also poll `getProcessOutput(pid)` periodically (without waiting) and process logs incrementally.

Note: The conversation shown here is an English translation of an exchange originally conducted in Japanese. Results may differ slightly when interacting in English.

Nice — the answer came back with a fairly long code sample and solid detail.

By the way, this code does run. You'll get a warning in one part where the newline is hard-coded as "\n" (you should use EOL instead), but it executes as-is.
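For reference, here is a minimal sketch of how the display lines could be written to avoid that warning. It assumes only what the assistant's sample already relies on: that `EOL` is usable as a string constant and that `println` accepts concatenated strings. The variable `out` holds a placeholder value here instead of real process output.

```
// Placeholder standing in for the result of getProcessOutput(pid)
string out = "sample output";

// Hard-coded newline: runs, but is the form that triggers the warning
// println("stdout:\n" + out);

// Same output, built with the EOL constant instead of "\n"
println("stdout:" + EOL + out);
```

The same substitution would apply to the `stderr` line in the sample.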

The information behind this answer lives among a large set of pages in the official VCSSL docs — specifically:

The AI correctly located both sources, understood them, and answered accordingly. It's clearly drawing from both (I authored both pages myself).

Next up: the VCSSL Assistant can read every page of the VCSSL standard library references when needed.

So if you're thinking, "What was the exact spec of that function again?", you can just ask — and it will look it up for you.

Let's try:

User:

Thanks! Here's my next question.

I want to place a 3D model on a coordinate system. Which functions should I call, and in what order?

I remember the argument order being a bit confusing.

(Processing time: 47s)

AI:

Great question! When you "place a model on a coordinate system," the common source of confusion is the

argument order for the mount* functions. Here are the essentials first:

Minimal code example (place in both world and a local coordinate)

    import graphics3d.Graphics3DFramework;
    import Graphics3D;

    int local, axisWorld, axisLocal, box;

    void onStart(int rendererID) {

        setWindowSize(800, 600);
        setBackgroundColor(0, 0, 0, 255);

        // 1: Create a local coordinate and mount it on the world (child → renderer)
        local = newCoordinate();
        mountCoordinate(local, rendererID); // place coordinate [child, renderer]

        // 2: Place a model in the world (model → renderer)
        axisWorld = newAxisModel(3.0, 3.0, 3.0);
        mountModel(axisWorld, rendererID);

        // 3: Place a model on the local coordinate (model → renderer → coordinate)
        axisLocal = newAxisModel(1.5, 1.5, 1.5);
        mountModel(axisLocal, rendererID, local);

        // 4: Example: place a box on the local coordinate, then move it a bit
        box = newBoxModel(1.0, 1.0, 1.0);
        mountModel(box, rendererID, local); // mounted on the local coordinate
        moveModel(box, 0.5, 0.0, 0.0); // translation (model → dx → dy → dz)
    }

Again: how to remember the argument order

If you'd like, I can also summarize the call patterns for rotation/spin/positioning

(e.g., `rotCoordinateZ` / `spinCoordinateZ` / `setCoordinateLocation`).

Note: The conversation shown here is an English translation of an exchange originally conducted in Japanese. Results may differ slightly when interacting in English.

This answer clearly draws not only on the library reference but also on the 3D graphics section of the official programming guide, and combines them coherently.

That's helpful as-is, but this time let's focus more narrowly on the library reference itself:

User:

Thanks! This is exactly the function I wanted to ask about.

Could you give me the detailed specification for this function?

mountModel( modelID, rendererID, coordinateID )

(Processing time: 49s)

AI:

Got it. Here's an accurate, to-the-point summary of the official spec for mountModel( modelID, rendererID, coordinateID ).

Related (easy to mix up)

Minimal usage example

    import graphics3d.Graphics3DFramework;
    import Graphics3D;

    void onStart(int rendererID) {

        int coord = newCoordinate();
        mountCoordinate(coord, rendererID); // place the coordinate in the world

        int axis = newAxisModel(1.5, 1.5, 1.5);
        mountModel(axis, rendererID, coord); // place the model on *that* coordinate
    }

(Point of comparison: the call/argument order between mountCoordinate and mountModel — model → renderer → destination coordinate.)

If you'd like, I can also summarize nearby APIs such as placing on the world, or translating/rotating after placement.

Note: The conversation shown here is an English translation of an exchange originally conducted in Japanese. Results may differ slightly when interacting in English.

Perfect — that's exactly the kind of answer and summarization we were hoping for.

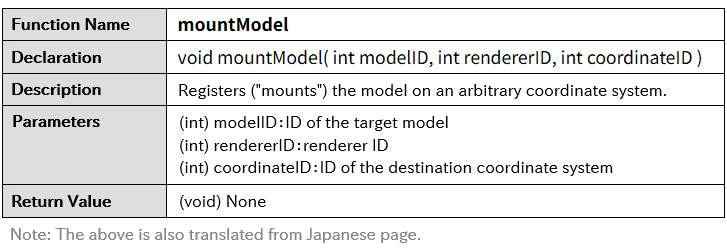

For reference, here's an excerpt from the actual library spec at the relevant section:

The red-boxed portion in the previous answer clearly came from this page.

Personally, the library references are the VCSSL docs I consult most often, so having the assistant surface details at this level of granularity looks genuinely useful.

So far we've focused on Q&A. From here, let's shift to having it write code that actually works as requested.

First, let's ask for a super-simple GUI app:

User:

Could you write VCSSL code that does the following? Thanks!

(Processing time: 1m54s)

AI:

Sounds good — let's do it.

The VCSSL code below creates a window with buttons "A" and "B" and a label.

Pressing A shows "Hello, there!"; pressing B shows "Who pressed B?!".

    coding UTF-8;

    import GUI;

    // Create the window
    int window = newWindow(100, 100, 340, 160, "Button Sample");

    // Label for displaying text (initially empty)
    int messageLabel = newTextLabel(20, 20, 300, 24, "");

    // Buttons A / B
    int buttonA = newButton(20, 70, 120, 40, "A");
    int buttonB = newButton(200, 70, 120, 40, "B");

    // Mount components on the window
    mountComponent(messageLabel, window);
    mountComponent(buttonA, window);
    mountComponent(buttonB, window);

    // Button-click handler
    void onButtonClick(int id, string label) {
        if (id == buttonA) {
            setComponentText(messageLabel, "Hello, there!");
        } else if (id == buttonB) {
            setComponentText(messageLabel, "Who pressed B?!");
        }
    }

    // Exit when the window is closed
    void onWindowClose(int id) {
        exit();
    }

Note: The conversation shown here is an English translation of an exchange originally conducted in Japanese. Results may differ slightly when interacting in English.

It produced code. The snippet is short, so writing it isn't too hard.

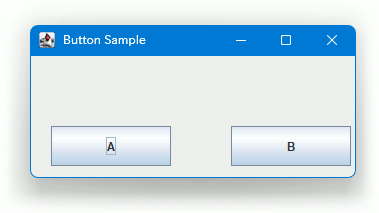

The question is whether a proper window appears and everything behaves correctly. Let's run it:

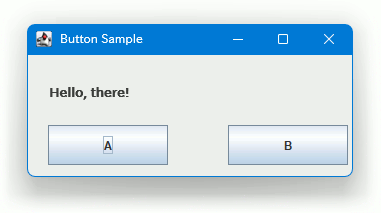

The window launches with buttons A and B. Pressing A...

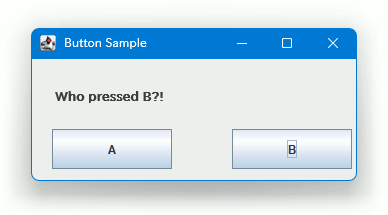

...shows "Hello, there!". Then pressing B...

...shows "Who pressed B?!". That's exactly the requested behavior.

Honestly, I expected the layout to be a bit off, but it turned out surprisingly clean — "good enough" for this purpose — despite the AI not being able to run the code or see the UI.

Not long ago, models tended to have zero visual intuition, so buttons would end up in baffling places. It turns out that progress in this area — visual intuition — has been much faster than I expected.

Alright, let's move on.

VCSSL also serves as the scripting language for automating and controlling RINEARN's graphing software, RINEARN Graph. In fact, we suspect most VCSSL users primarily use it to control RINEARN Graph.

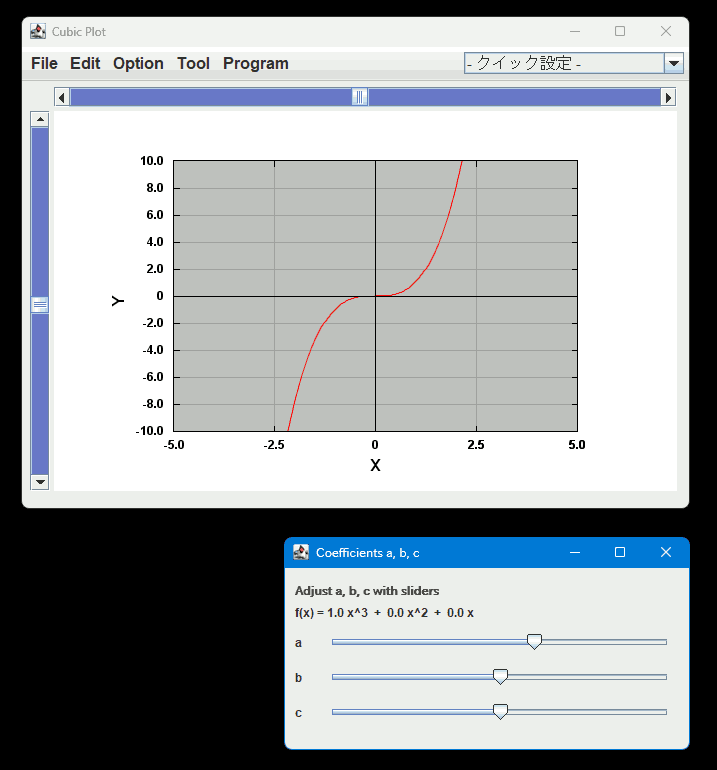

So let's combine VCSSL's GUI features with graph control and have it build a small tool that actually does something useful:

User:

Thanks, that was perfect! Next, could you write code like this?

(Processing time: 2m41s)

AI:

Sure! Here's a sample that plots the cubic y = a x^3 + b x^2 + c x

on a 2D graph, with a GUI containing three sliders to adjust a, b, and c.

It should run as-is (copy & paste).

    // coding: UTF-8

    import GUI;
    import Math;
    import tool.Graph2D; // 2D graph control

    // ---- Graph settings ----
    const int N = 400; // number of points
    const double X_MIN = -5.0;
    const double X_MAX = 5.0;
    const double Y_MIN = -10.0;
    const double Y_MAX = 10.0;

    // Coefficients (initial values)
    double a = 1.0;
    double b = 0.0;
    double c = 0.0;

    // ---- Global handles ----
    int graph; // 2D graph ID
    double xData[ N ];
    double yData[ N ];

    int window; // GUI window
    int sliderA, sliderB, sliderC; // slider IDs
    int infoLabel; // label for showing parameters

    // ---- Compute & plot ----
    void plotCubic() {

        // place x at equal intervals, compute y = a x^3 + b x^2 + c x
        double dx = (X_MAX - X_MIN) / (N - 1);
        for (int i=0; i<N; i++) {
            double x = X_MIN + dx * i;
            xData[i] = x;
            yData[i] = a*x*x*x + b*x*x + c*x;
        }

        // send data to the graph
        setGraph2DData(graph, xData, yData);
    }

    // ---- Update the info label ----
    void updateInfo() {
        string text =
            "f(x) = "
            + round(a, 3, HALF_UP) + " x^3 + "
            + round(b, 3, HALF_UP) + " x^2 + "
            + round(c, 3, HALF_UP) + " x";
        setComponentString(infoLabel, text);
        paintComponent(infoLabel);
        paintComponent(window);
    }

    // ---- Initialization (top-level code runs once on startup) ----

    // Launch and configure the 2D graph
    graph = newGraph2D( 0, 0, 800, 500, "Cubic Plot" );
    setGraph2DAutoRange( graph, false, false );
    setGraph2DRange( graph, X_MIN, X_MAX, Y_MIN, Y_MAX );
    setGraph2DOption( graph, "WITH_POINTS", false );
    setGraph2DOption( graph, "WITH_LINES", true );
    plotCubic();

    // Create the GUI window
    window = newWindow( 820, 20, 420, 220, "Coefficients a, b, c" );

    // Title and current values
    int titleLabel = newTextLabel( 10, 10, 380, 18, "Adjust a, b, c with sliders" );
    mountComponent( titleLabel, window );
    infoLabel = newTextLabel( 10, 35, 380, 18, "" );
    mountComponent( infoLabel, window );
    updateInfo();

    // Slider for a
    int labelA = newTextLabel( 10, 65, 30, 18, "a" );
    mountComponent( labelA, window );
    sliderA = newHorizontalSlider( 40, 65, 350, 20, a, -5.0, 5.0 );
    mountComponent( sliderA, window );

    // Slider for b
    int labelB = newTextLabel( 10, 100, 30, 18, "b" );
    mountComponent( labelB, window );
    sliderB = newHorizontalSlider( 40, 100, 350, 20, b, -5.0, 5.0 );
    mountComponent( sliderB, window );

    // Slider for c
    int labelC = newTextLabel( 10, 135, 30, 18, "c" );
    mountComponent( labelC, window );
    sliderC = newHorizontalSlider( 40, 135, 350, 20, c, -5.0, 5.0 );
    mountComponent( sliderC, window );

    // Redraw the window at the end
    paintComponent( window );

    // ---- Event handler ----
    void onSliderMove(int id, float value) {
        if (id == sliderA) { a = value; }
        if (id == sliderB) { b = value; }
        if (id == sliderC) { c = value; }
        updateInfo();
        plotCubic();
    }

Note: The conversation shown here is an English translation of an exchange originally conducted in Japanese. Results may differ slightly when interacting in English.

Whoa — this time the code is quite long and complex...

Will it really run? Let's try it.

We expected a few errors, since code this long often contains some, but this time it worked on the very first try.

The layout looks reasonable, the behavior matches the requirements, and when you move the slider, the graph updates in lockstep. This could honestly be used as a teaching example as-is.

By the way, you can right-click here to download the code and try it yourself.

A quick aside: personally, I think it went "a bit too smoothly on the first try," so let me add some context.

This assistant has access to the entire VCSSL Code Archive — our collection of sample code and explanations — and can read any article as needed. Looking through that content, there are several entries that are highly relevant — for example, this one is a typical match.

So in this particular case, it likely benefited from those materials and wrote good code more smoothly than I expected. In general, at this difficulty level you can expect a bit more struggle — some joint debugging, or revisiting the design.

That said, the way it adapts existing examples and turns them into a coherent solution for a different task is pleasantly surprising. If that's possible, then by enriching the Code Archive on our side, the assistant effectively "gets smarter on its own," which is motivating — almost like a new kind of training game.

Note: I wrote all of the code in the VCSSL Code Archive, and it is published under CC0 (effectively public domain). There are no copyright concerns about code the AI produces by drawing on it.

Let's keep going (this is fun).

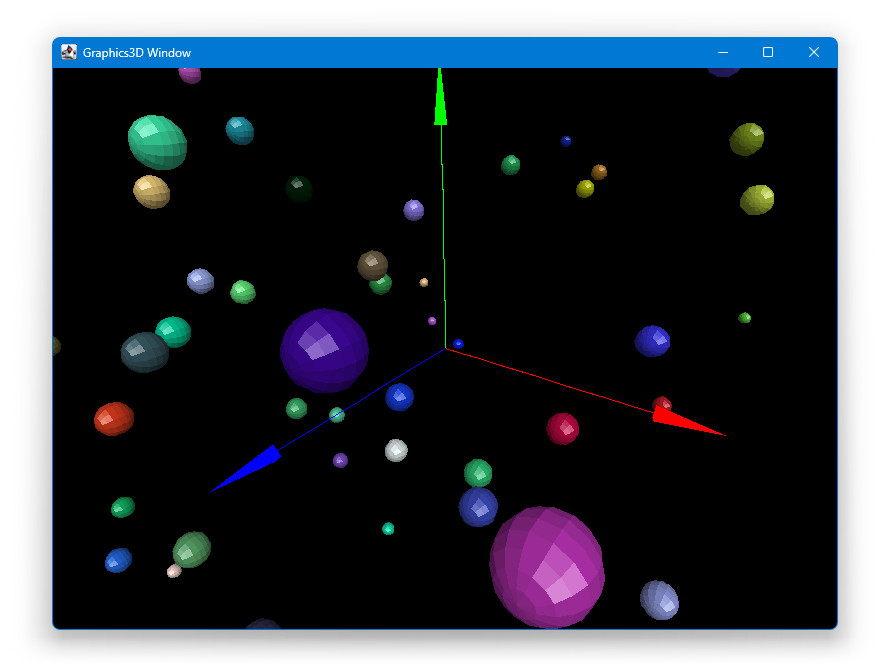

This time we'll ask it to write some 3D rendering code:

User:

Thanks!!! Let's move on. Could you write code that does the following?

(Processing time: 1m 37s)

AI:

Absolutely. Here's a sample that places 100 spheres with random positions and random colors in 3D space, using VCSSL's standard 3D framework.

    coding UTF-8;

    import graphics3d.Graphics3DFramework;
    import Graphics3D;
    import Math; // using random()

    const int N = 100; // number of spheres

    void onStart (int rendererID) {

        // Window / background
        setWindowSize(800, 600);
        setBackgroundColor(0, 0, 0, 255);

        // Axes as a reference
        int axis = newAxisModel(5.0, 5.0, 5.0);
        mountModel(axis, rendererID);

        // Generate random spheres
        for (int i = 0; i < N; i++) {

            // Random radius (same for X/Y/Z), roughly in [0.15, 0.40]
            float r = 0.15 + 0.25 * random();
            int sphere = newSphereModel(r, r, r, 14, 10);

            // Random color components in [0, 255)
            // (implicit float → int conversion on assignment)
            int R = 255 * random();
            int G = 255 * random();
            int B = 255 * random();
            setModelColor(sphere, R, G, B, 255);

            // Mount first
            mountModel(sphere, rendererID);

            // Then move to a random position in roughly [-10, +10] on each axis
            float x = 20.0 * random() - 10.0;
            float y = 20.0 * random() - 10.0;
            float z = 20.0 * random() - 10.0;
            moveModel(sphere, x, y, z);
        }
    }

Note: The conversation shown here is an English translation of an exchange originally conducted in Japanese. Results may differ slightly when interacting in English.

Looking at the result, maybe this was a bit too easy.

Let's run it:

It ran on the first try. The behavior matches the requirements — guess it really was on the easy side.

Let's make things a bit harder.

To be honest, I'm kind of hoping it slips up here — I want to show how we'd debug together.

User:

Thanks!

Could you make those spheres "jitter" in place — like Brownian motion?

(Processing time: 2m 48s)

AI:

Great idea! If you want each sphere to "wiggle in place," the simplest and safest approach is to mount each sphere on its own local coordinate system,

then jitter that origin a tiny bit with randomness every frame.

Since onUpdate is called dozens of times per second, you can update coordinates there. Use setCoordinateLocation to set the origin directly. For randomness, use random() (in [0.0, 1.0)).

    coding UTF-8;

    import Math;
    import Graphics3D;
    import graphics3d.Graphics3DFramework;

    // Number of spheres
    const int N = 100;

    // Model IDs and coordinate-system IDs for each sphere
    int sphere[N];
    int coord [N];

    // Base (initial) position per sphere, plus displacement/velocity for the jitter
    float baseX[N], baseY[N], baseZ[N];
    float dx[N], dy[N], dz[N];
    float vx[N], vy[N], vz[N];

    // Parameters (tweak to taste)
    const float SPACE_RANGE = 10.0; // initial placement range (±)
    const float JIT_ACCEL = 0.06; // strength of random acceleration
    const float FRICTION = 0.92; // damping (0.0 - 1.0; 1.0 = no damping)
    const float JIT_LIMIT = 0.6; // max jitter amplitude from base position (±)
    const int SEG_U = 14; // sphere tessellation
    const int SEG_V = 10;

    void onStart(int rendererID) {

        // Make colors pop a bit (brighter ambient light)
        setAmbientLightBrightness(1.0);

        setWindowSize(900, 600);
        setBackgroundColor(0, 0, 0, 255);

        // Axes as a visual reference
        mountModel(newAxisModel(6.0, 6.0, 6.0), rendererID);

        // Create N spheres, each on its own coordinate system
        for (int i=0; i<N; i++) {

            // 1) Create a local coordinate for this sphere and mount it in the world
            coord[i] = newCoordinate();
            mountCoordinate(coord[i], rendererID); // create & mount the coordinate

            // 2) Pick a random base position and set it
            baseX[i] = SPACE_RANGE * (2.0*random() - 1.0);
            baseY[i] = SPACE_RANGE * (2.0*random() - 1.0);
            baseZ[i] = SPACE_RANGE * (2.0*random() - 1.0);
            setCoordinateLocation(coord[i], baseX[i], baseY[i], baseZ[i]); // set origin directly

            // 3) Create the sphere model, give it a random color, and mount it on the coordinate
            float r = 0.15 + 0.25*random(); // slightly random radius
            sphere[i] = newSphereModel(r, r, r, SEG_U, SEG_V);
            int R = int(255 * random());
            int G = int(255 * random());
            int B = int(255 * random());
            setModelColor(sphere[i], R, G, B, 255);
            mountModel(sphere[i], rendererID, coord[i]); // mount sphere on its coordinate

            // Displacement & velocity start at zero (arrays default to 0, explicit is fine)
            dx[i]=dy[i]=dz[i]=0.0;
            vx[i]=vy[i]=vz[i]=0.0;
        }
    }

    void onUpdate(int rendererID) { // called dozens of times per second

        for (int i=0; i<N; i++) {

            // Random acceleration + damped velocity (gives a "jittery" feel)
            vx[i] = FRICTION * vx[i] + JIT_ACCEL * (2.0*random() - 1.0);
            vy[i] = FRICTION * vy[i] + JIT_ACCEL * (2.0*random() - 1.0);
            vz[i] = FRICTION * vz[i] + JIT_ACCEL * (2.0*random() - 1.0);

            dx[i] += vx[i];
            dy[i] += vy[i];
            dz[i] += vz[i];

            // Clamp the jitter amplitude relative to the base position
            if (dx[i] > JIT_LIMIT) dx[i] = JIT_LIMIT;
            if (dx[i] < -JIT_LIMIT) dx[i] = -JIT_LIMIT;
            if (dy[i] > JIT_LIMIT) dy[i] = JIT_LIMIT;
            if (dy[i] < -JIT_LIMIT) dy[i] = -JIT_LIMIT;
            if (dz[i] > JIT_LIMIT) dz[i] = JIT_LIMIT;
            if (dz[i] < -JIT_LIMIT) dz[i] = -JIT_LIMIT;

            // Set the origin to (base + jitter)
            setCoordinateLocation(coord[i], baseX[i]+dx[i], baseY[i]+dy[i], baseZ[i]+dz[i]);
            // Example of directly controlling the origin inside onUpdate
        }
    }

Note: The conversation shown here is an English translation of an exchange originally conducted in Japanese. Results may differ slightly when interacting in English.

Hmm, this is more compact and cleaner than I expected. Maybe that was too easy.

Let's run it:

Oh, nice — an error!

I was kind of hoping for one, to be honest. Perfect timing.

When you see an error like this, just copy and paste the message to the assistant:

User:

Thanks! But I get the following error:

---

Syntax Error

[ Location ] around line 55

[ Content ] int R = int(255 * random())

[ Details ] Attempting to call an undeclared function "int".

(Processing time: 3m 27s)

AI:

Whoops — sorry! In VCSSL, "int(...)" is parsed as a function call, so it's treated as a call to a non-existent function named `int`, which triggers that error.

For explicit casting in VCSSL, use the C-style `(int)expr` form (e.g., `print( (int)2.883 );`).

There's also implicit conversion on assignment:

assigning a real to an `int` variable truncates the fractional part (e.g., `int i = 2.883; // becomes 2`).

So either of the following is fine for generating RGB values:

Also, `setModelColor` expects integers in 0-255 for RGBA (the first argument is the model ID, followed by R, G, B, alpha), so either approach works well.

Fixed full code (random placement + Brownian jitter)

    import graphics3d.Graphics3DFramework;
    import Graphics3D;
    import Math; // random()

    ...

Note: The conversation shown here is an English translation of an exchange originally conducted in Japanese. Results may differ slightly when interacting in English.

That's how it investigates the cause and proposes a fix.
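To make the fix concrete, here is a minimal sketch of the two forms the assistant describes, the explicit C-style cast and the implicit conversion on assignment. It is an illustration rather than the assistant's actual corrected code, which is abbreviated above. The variable names `r1` and `r2` are made up for this example, and it assumes, as stated in the transcript, that `random()` from the Math library returns a value in [0.0, 1.0).

```
import Math; // random()

// Explicit C-style cast: the fractional part is dropped.
// Writing int(255 * random()) instead is what caused the error above.
int r1 = (int)(255 * random());

// Implicit conversion on assignment: also truncates the fractional part.
int r2 = 255 * random();

println("explicit cast: " + r1);
println("implicit conversion: " + r2);
```

Either form yields an integer from 0 to 254, which fits the 0-255 range that `setModelColor` expects.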

In this run there were two more small errors. Once you cross a certain line of difficulty/complexity, mistakes tend to pop up, likely because there's less headroom in the model's working context.

Still, pasting each error back like above eventually got everything fixed (though with very hard issues, it can fail to fix them).

Now for the result:

Nice — exactly as requested, the spheres are jittering in place!

One caveat: each fix can take a few minutes, so if you already know the cause, it's usually faster to patch it yourself. You'll often hit the next error right after, and waiting "a few minutes per round" adds up.

On the other hand, as a quick way to draft a working foundation in a few minutes, this is pretty handy. If you let the AI sketch the scaffolding and then have a human do the final polish and debugging, it can be a productive split of work.

So far we've looked at promising results from the VCSSL Assistant.

But as anyone who uses AI regularly knows, there's a point where:

Knowing that — and keeping your requests inside that limit line — is essential for practical use.

To wrap up, let's look at a case that was too difficult.

We asked for a fairly challenging fluid simulation:

User:

For the next request, could you write code that:

(Processing time: 2m 46s)

AI:

Sounds good — let's try it.

Below is VCSSL code that constructs a simple "vortical (incompressible) vector field," then advects particles through it and displays the result in real time.

The vector field is built by composing multiple sine waves into a stream function ψ, then taking (u, v) = (∂ψ/∂y, -∂ψ/∂x).

That produces "turbulent-looking" vortices in both appearance and motion (it isn't a strict Navier-Stokes solution, but it's lightweight and animates nicely).

Execution runs through the 2D framework's onStart / onUpdate / onPaint callbacks (the framework calls them dozens of times per second).

Use setGraphics2DColor and clearGraphics2D for background color and clearing; use drawPoint / drawLine for drawing.

If needed, setFrameRate specifies the target FPS. Math (sin, cos) comes from the Math library. Initialize randomness with random().

    coding UTF-8;

    // ==== Libraries ====
    import Graphics;
    import Graphics2D;
    import GUI;
    import Math;

    // ==== Screen & simulation settings ====
    const int WIDTH = 900;
    const int HEIGHT = 600;
    const int NPART = 6000; // number of particles (reduce if heavy)
    const int NMODE = 5; // number of sine-wave modes for ψ
    const float DT = 1.0/60.0; // simulation time step (sec-equivalent)
    const int LINE_W = 1; // stroke width

    // ==== Particle state ====
    float px[NPART], py[NPART]; // current position
    float ppx[NPART], ppy[NPART]; // previous position (for trails)

    // ==== Mode coefficients for stream function ψ ====
    float kx[NMODE], ky[NMODE]; // wave vectors [rad/pixel]
    float omega[NMODE]; // angular speeds [rad/sec]
    float amp[NMODE]; // amplitude scale for ψ
    float phase[NMODE]; // initial phase

    ...

Note: The conversation shown here is an English translation of an exchange originally conducted in Japanese. Results may differ slightly when interacting in English.

As you can see, instead of saying "can't," it bravely produced a long, intricate program.

Honestly, it would help if it said "This is probably beyond me right now," but today's AIs tend to cheerfully say "you got it!" no matter what (endearing, but risky).

My hunch is that current models don't have a strong sense of their own capability boundary — whether a task is comfortably doable or likely out of reach. That's a key difference from humans, and something to watch out for when using AI.

When we tried to run the code, a cascade of small, syntax-level mix-ups popped up, likely a sign that the task's difficulty was pushing the model right to the edge of its working capacity.

We fixed them one by one by hand and finally got it to run, but the screen stayed black with nothing drawn (internally it seemed to be doing "something-ish," but no visible output).

So the impression remains: asking it to build a complex simulation end-to-end on its own is still tough right now. If/when that barrier falls, it'll be a big win — but for now, it's a future goal.
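For reference, the stream-function idea in the AI's reply is sound in itself: if (u, v) = (∂ψ/∂y, -∂ψ/∂x), then ∂u/∂x + ∂v/∂y = ∂²ψ/∂x∂y - ∂²ψ/∂y∂x = 0, so the field is incompressible by construction. Below is a minimal sketch of that idea, written in Python rather than VCSSL, and it is not the AI's actual code. The parameter names (kx, ky, omega, amp, phase) mirror the excerpt above, but the exact form of ψ, the value ranges, and the helper names velocity, divergence, and advect are all assumptions made for illustration.

```python
import math
import random

# Hypothetical mode parameters; the values are illustrative only and are
# not taken from the AI-generated program.
random.seed(0)
N_MODE = 5
MODES = [
    {
        "kx": random.uniform(0.01, 0.05),     # wave number in x [rad/pixel]
        "ky": random.uniform(0.01, 0.05),     # wave number in y [rad/pixel]
        "omega": random.uniform(0.5, 2.0),    # angular speed [rad/sec]
        "amp": random.uniform(50.0, 150.0),   # amplitude of this mode
        "phase": random.uniform(0.0, 2.0 * math.pi),
    }
    for _ in range(N_MODE)
]


def velocity(x, y, t):
    """Return (u, v) = (dpsi/dy, -dpsi/dx).

    Assumed stream function:
        psi = sum(amp * sin(kx*x + ky*y - omega*t + phase))
    so each mode contributes amp * cos(...) times ky (for u) or -kx (for v).
    """
    u = 0.0
    v = 0.0
    for m in MODES:
        theta = m["kx"] * x + m["ky"] * y - m["omega"] * t + m["phase"]
        c = m["amp"] * math.cos(theta)
        u += m["ky"] * c    # dpsi/dy
        v += -m["kx"] * c   # -dpsi/dx
    return u, v


def divergence(x, y, t, h=1e-3):
    """Estimate du/dx + dv/dy with central differences."""
    du_dx = (velocity(x + h, y, t)[0] - velocity(x - h, y, t)[0]) / (2 * h)
    dv_dy = (velocity(x, y + h, t)[1] - velocity(x, y - h, t)[1]) / (2 * h)
    return du_dx + dv_dy


def advect(x, y, t, dt):
    """Move one particle by a single forward-Euler step."""
    u, v = velocity(x, y, t)
    return x + u * dt, y + v * dt


if __name__ == "__main__":
    # The divergence should be zero up to finite-difference error.
    print(divergence(450.0, 300.0, 0.0))
    print(advect(450.0, 300.0, 0.0, 1.0 / 60.0))
```

Forward Euler is used here only because it is the simplest particle update; a real-time version would also need the drawing and boundary handling that the generated program attempted.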

In trying various harder tasks, I noticed a few recurring error patterns:

My guess is that for difficult problems the model thinks very deeply, which stretches its working context. As that context gets tight, fine-grained VCSSL rules fall out of focus, or there just isn't enough bandwidth left to check them carefully.

I also often saw cases like:

On short snippets it tends to check the function specs and catch this, but the longer and harder the code, the more frequently it slips.

Overall, as code grows longer and more complex, checks on syntax and function specs get looser, and lots of small mistakes start popping up.

Given that, the current practical ceiling for coding tasks seems to be things like:

To wrap up the coding tests, let's end on something a bit silly.

Modern conversational AIs like ChatGPT are, well, creatures of words, so their visual/spatial imagination is supposedly weak — though it's been improving fast lately.

So if we ask it to imagine a complex 3D shape and build something... surely it'll produce something amusing, right?

Let's try: we'll ask for a 3D dolphin.

User:

Thanks! Okay, next: please draw a realistic dolphin in 3D!

(Processing time: 4m 21s)

AI:

Great idea! I'll hand-build a mesh that looks "semi-realistic," add a swimming motion, and display it.

(VCSSL's 3D uses Z-sorting and flat shading, and doesn't support textures, so photorealism is limited — shape will have to do the heavy lifting.)

I'll assemble a QUADRANGLE_GRID for the body (surface of revolution with some bend), pectoral and dorsal fins (thin plates), and the tail fluke (a horizontal thin plate), and update each frame via `setModelVertex(..., QUADRANGLE_GRID)`.

To render the thin plates from both sides, I'll disable culling. I'll mirror the left/right pectorals and correct front/back.

I'll also tweak diffuse/specular/ambient coefficients a bit. (Window and background follow the guide setup.)

coding UTF-8;

import Math;

import Graphics3D;

import graphics3d.Graphics3DFramework;

// ===== Resolution / dimensions =====

const int U_BODY = 80; // body: longitudinal segments

const int V_BODY = 24; // body: around the circumference

const int U_FIN = 18; // fin: root → tip

const int V_FIN = 4; // fin: thickness direction (thin → small)

const int U_TAIL = 16; // tail: root → tip

const int V_TAIL = 20; // tail: span (left ↔ right)

...

Note: The conversation shown here is an English translation of an exchange originally conducted in Japanese. Results may differ slightly when interacting in English.

After about four and a half minutes of thinking, it produced a very long program.

When we ran it, the usual sprinkling of small errors appeared — but we fixed them with giddy anticipation. What glorious (or questionable) creature is about to be born...?

And then — it finally ran:

...hmm. ...I mean, it's more impressive than I expected? But it's definitely not a dolphin. Still, it's a lot less chaotic than what I imagined. And yet... I'm honestly not sure what to say about this one.

As is often the case with AI topics, this turned into a very long post, but that's it for today's update.

We went back and forth on whether to release the VCSSL Assistant now or keep it on ice a little longer. It's likely helpful for introductory tasks and a little beyond, but it still can't reliably handle long, complex programs...

Then I revisited our post from a year ago about the RINEARN Graph 3D Assistant, and the pace of progress over just one year really struck me. Thanks to model advances, what was the ceiling back then is the floor now, and today's ceiling would've sounded like sci-fi a year ago.

Given that AI models are evolving at a pace where a single year can change everything, we decided that if it just barely clears the bar today, it's better to ship and learn. So we're releasing it — rough edges and all — while keeping an eye on the road ahead.

You may still run into moments that make you sigh. But we're confident it will grow into a dependable partner, and we'd be grateful if you could give it a little patience in the meantime.

We'll keep sharing updates here about the VCSSL Assistant and our broader AI efforts. Stay tuned!